Maximizing ROI with Generative AI:

A Strategic Guide for Business Leaders

Generative AI has evolved from a novelty into the core engine of modern business operations. By 2026, it is no longer just about chatbots; it’s about autonomous agents, automated workflows, and high-speed data synthesis. But how does this technology actually work under the hood, and how can your organization leverage it safely?

What is Generative AI in 2026?

Generative AI is a subset of artificial intelligence designed to create original content, ranging from text and code to high-fidelity images and synthetic data. Unlike traditional "Discriminative AI," which assigns labels or scores to existing data (for example, flagging an email as spam), Generative AI uses neural networks to learn the patterns in its training data and synthesize new outputs that can resemble human writing and reasoning.

For businesses, this translates to massive gains in scalability, allowing teams to automate complex cognitive tasks that previously required manual intervention.

How Does Generative AI Work? (The LLM Architecture)

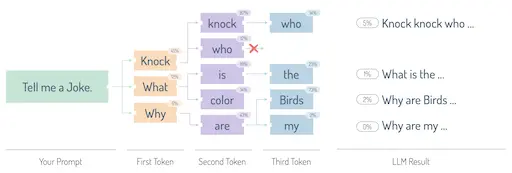

Modern Generative AI is primarily powered by Large Language Models (LLMs). They are built on the Transformer architecture, introduced by Google researchers in the 2017 paper "Attention Is All You Need," and operate as sophisticated probabilistic engines. When you provide a prompt, the model calculates a probability for each possible next "token" (a word or word fragment) based on patterns learned from the text it processed during training, often trillions of tokens. It doesn't "know" facts in the human sense; it predicts the most statistically likely continuation of your text.
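To make the "probabilistic engine" idea concrete, here is a minimal sketch of the last step of a prediction: turning raw scores into next-token probabilities with a softmax. The tokens and scores in `toy_logits` are made up for illustration; a real model produces scores for its entire vocabulary.

```python
import math

def softmax(logits):
    """Convert raw scores (logits) into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Hypothetical scores a model might assign after the prompt "The invoice is"
toy_logits = {" due": 3.0, " paid": 2.0, " late": 1.0, " purple": -2.0}

probs = softmax(toy_logits)
most_likely = max(probs, key=probs.get)

# Print candidates from most to least likely
for token, p in sorted(probs.items(), key=lambda kv: -kv[1]):
    print(f"{token!r}: {p:.3f}")
```

The highest-scoring token gets the largest share of probability, but the alternatives keep a nonzero chance of being chosen.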

Transformers and Context Windows: The AI’s "Brain"

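The Transformer architecture is what allows AI to understand context. Unlike older recurrent models that processed text one token at a time, Transformers use "Attention Mechanisms" to compare every token in the input with every other token at once, so each word is interpreted in light of the words around it.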
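The core calculation behind attention can be sketched in a few lines. This is a simplified, single-head version of scaled dot-product attention using made-up two-dimensional vectors; production Transformers add learned projections, many attention heads, and far larger vectors.

```python
import math

def softmax(xs):
    peak = max(xs)
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        # Score this token's query against every token's key.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # The output is a weighted average of all value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs

# Three toy token vectors (illustrative numbers only)
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mixed = attention(tokens, tokens, tokens)

for original, blended in zip(tokens, mixed):
    print(original, "->", [round(x, 3) for x in blended])
```

Each output vector blends information from every token in the input, weighted by relevance, which is how the model relates distant parts of a text.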

For CTOs, the most critical concept today is the Context Window. This determines how much information the AI can "keep in mind" during a conversation. Modern models now support massive windows, allowing you to upload entire sets of technical documentation or codebases for the AI to analyze alongside your initial instructions. This is where Prompt Engineering becomes a high-leverage skill: structuring your input to guide the model’s focus.
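A quick way to reason about a context window is to budget tokens before sending a request. The sketch below uses a rough rule of thumb of about four characters per token for English text, which is an assumption; real tokenizers vary by model and language. The function names and the 128,000-token window are hypothetical examples, not any vendor's limits.

```python
def estimate_tokens(text, chars_per_token=4):
    """Rough heuristic only; use the model's own tokenizer for exact counts."""
    return max(1, len(text) // chars_per_token)

def fits_in_window(instructions, documents, window_tokens, reserve_for_answer=1000):
    """Return (fits, tokens_used), leaving room for the model's reply."""
    used = estimate_tokens(instructions) + sum(estimate_tokens(d) for d in documents)
    return used + reserve_for_answer <= window_tokens, used

instructions = "Summarize the breaking changes in these release notes."
documents = ["x" * 40_000, "y" * 80_000]  # stand-ins for two long documents

fits_large, used = fits_in_window(instructions, documents, window_tokens=128_000)
fits_small, _ = fits_in_window(instructions, documents, window_tokens=8_000)
print(f"Estimated tokens: {used}, fits 128k: {fits_large}, fits 8k: {fits_small}")
```

When the material does not fit, the usual options are to trim it, split it across several requests, or retrieve only the relevant passages.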

To maximize your output quality, use our ChatGPT Prompt Optimizer to refine your instructions for enterprise-grade results.

The Enterprise Training Pipeline

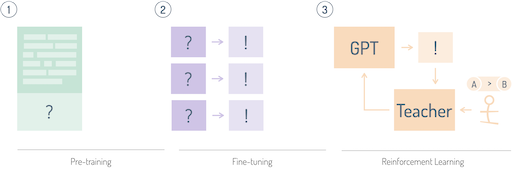

Building a production-ready model like GPT-4o or Claude 3.5/4 involves three sophisticated stages:

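- Self-Supervised Pre-training: The model "reads" very large text corpora, such as the public web and licensed or private datasets, and learns to predict the next token. In the process it picks up the structure of language, logic, and even basic programming.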

- Instruction Fine-Tuning: The model is trained on curated pairs of questions and answers. This teaches the AI how to behave as a helpful assistant rather than just a text-completer.

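- Preference Alignment (RLHF & DPO): Human reviewers rank alternative responses to the same prompt. Reinforcement Learning from Human Feedback (RLHF) uses those rankings to train a reward model that then guides further training, while Direct Preference Optimization (DPO) trains on the preference pairs directly, without a separate reward model. Both steer the AI toward responses that are safe, helpful, and aligned with the values its developer specifies.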
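For readers curious about what the preference alignment stage optimizes, here is a minimal sketch of the DPO loss for a single preference pair. The inputs are log-probabilities that the model being trained (the "policy") and a frozen reference copy assign to the preferred and rejected responses; the numbers below are invented for illustration, and `beta` is a tunable strength parameter.

```python
import math

def dpo_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected, beta=0.1):
    """DPO loss for one preference pair, given sequence log-probabilities."""
    # How much more the policy favors the chosen response than the reference does
    margin = (policy_chosen - ref_chosen) - (policy_rejected - ref_rejected)
    # -log(sigmoid(beta * margin)), written as log(1 + exp(-beta * margin))
    return math.log1p(math.exp(-beta * margin))

# Policy identical to the reference: no preference learned yet
neutral = dpo_loss(-10.0, -12.0, -10.0, -12.0)

# Policy now rates the human-preferred answer higher and the rejected one lower
improved = dpo_loss(-8.0, -14.0, -10.0, -12.0)

print(f"neutral loss:  {neutral:.4f}")
print(f"improved loss: {improved:.4f}")
```

The loss falls as the model shifts probability toward the responses people preferred, which is the behavior this stage is meant to reinforce.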

Auto-Regressive Generation & Sampling

When generating a response, the AI uses Auto-regressive generation—predicting the next token based on all previous tokens in the sequence. To prevent the AI from being too repetitive or "robotic," we use Sampling Techniques (like Top-P and Temperature).
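The generation loop itself is simple, as this toy sketch shows. `TOY_MODEL` is a hypothetical stand-in for an LLM: it receives the full sequence so far but, to stay small, only looks at the last token. A real model conditions on everything in the sequence.

```python
import random

# Hypothetical next-token probabilities, keyed by the most recent token
TOY_MODEL = {
    "<start>": {"the": 1.0},
    "the": {"report": 0.6, "invoice": 0.4},
    "report": {"is": 1.0},
    "invoice": {"is": 1.0},
    "is": {"ready": 0.7, "late": 0.3},
    "ready": {"<end>": 1.0},
    "late": {"<end>": 1.0},
}

def next_token_probs(sequence):
    """Stand-in for a model call; a real LLM would use the whole sequence."""
    return TOY_MODEL[sequence[-1]]

def generate(max_tokens=10, seed=0):
    rng = random.Random(seed)  # fixed seed makes the run repeatable
    sequence = ["<start>"]
    for _ in range(max_tokens):
        probs = next_token_probs(sequence)
        tokens, weights = zip(*probs.items())
        token = rng.choices(tokens, weights=weights, k=1)[0]
        if token == "<end>":
            break
        sequence.append(token)  # the new token becomes part of the next input
    return sequence[1:]

sentence = generate()
print(" ".join(sentence))
```

Each chosen token is appended to the input before the next prediction, which is why early choices shape everything that follows.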

Adjusting the Temperature allows business users to toggle between "Precision" and "Creativity." A low temperature (0.1) is ideal for legal summaries or data extraction, while a high temperature (0.8+) is better for brainstorming marketing slogans or creative writing.
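As a rough illustration of what these two settings do, the sketch below applies temperature and Top-P to a set of invented token scores. The function names are our own, and real inference libraries differ in details; note that this version requires a temperature greater than zero.

```python
import math
import random

def apply_temperature(logits, temperature):
    """Divide scores by the temperature (must be > 0), then softmax."""
    scaled = {t: s / temperature for t, s in logits.items()}
    peak = max(scaled.values())
    exps = {t: math.exp(s - peak) for t, s in scaled.items()}
    total = sum(exps.values())
    return {t: e / total for t, e in exps.items()}

def top_p_filter(probs, top_p):
    """Keep the smallest set of top tokens whose probabilities reach top_p."""
    kept, cumulative = {}, 0.0
    for tok, p in sorted(probs.items(), key=lambda kv: -kv[1]):
        kept[tok] = p
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(kept.values())
    return {t: p / total for t, p in kept.items()}  # renormalize survivors

def sample(logits, temperature=0.8, top_p=0.9, rng=random):
    probs = top_p_filter(apply_temperature(logits, temperature), top_p)
    tokens, weights = zip(*probs.items())
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical scores for the next word of a marketing slogan
toy_logits = {"reliable": 2.0, "fast": 1.5, "bold": 1.0, "purple": -1.0}

precise = apply_temperature(toy_logits, 0.1)
creative = apply_temperature(toy_logits, 0.8)
print("T=0.1:", {t: round(p, 3) for t, p in precise.items()})
print("T=0.8:", {t: round(p, 3) for t, p in creative.items()})
print("sampled:", sample(toy_logits))
```

At a low temperature almost all probability collapses onto the top candidate, while a higher temperature spreads it across alternatives; Top-P then trims the unlikely tail before a token is drawn.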

Future-Proofing Your Business: Beyond the Chatbot

In 2026, the real value of Generative AI lies in Retrieval-Augmented Generation (RAG) and AI Agents. RAG allows the AI to "look up" your company’s private, up-to-date data before answering, which grounds responses in your own sources and substantially reduces hallucinations, though it does not eliminate them. Meanwhile, AI Agents can now execute tasks autonomously, like booking meetings, updating CRMs, or writing and deploying code.
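The retrieval half of RAG can be sketched without any AI service at all. This simplified example ranks a few invented company documents by word overlap with the question and places the best match into the prompt. Production systems typically use embedding models and a vector database instead of word counts, and the final prompt would be sent to an LLM, which is omitted here.

```python
import math
import re
from collections import Counter

def vectorize(text):
    """Bag-of-words vector: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def retrieve(question, documents, k=1):
    """Return the k documents most similar to the question."""
    q = vectorize(question)
    ranked = sorted(documents, key=lambda d: cosine(q, vectorize(d)), reverse=True)
    return ranked[:k]

def build_prompt(question, documents, k=1):
    context = "\n".join(f"- {d}" for d in retrieve(question, documents, k))
    return (f"Answer using only the context below.\n\n"
            f"Context:\n{context}\n\nQuestion: {question}")

# Hypothetical internal knowledge base
DOCS = [
    "Refund policy: customers may request a refund within 30 days of purchase.",
    "Office hours: support is available Monday to Friday, 9am to 5pm.",
    "Shipping: standard delivery takes 3 to 5 business days.",
]

question = "How many days do customers have to request a refund?"
prompt = build_prompt(question, DOCS)
print(prompt)
```

Because the model is handed the relevant passage and told to rely on it, the answer can be traced back to a source document, which is the main reason RAG improves reliability.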

Implementing these technologies isn't just about efficiency; it's about building a scalable, data-driven moat around your business. Understanding these fundamentals ensures you can lead your organization through the AI transition with confidence.

The future of work isn't just assisted by AI—it's accelerated by it.